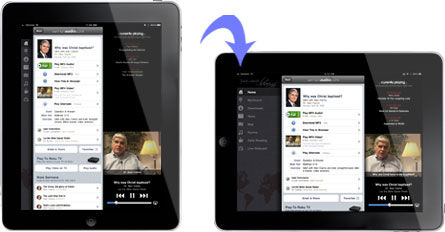

A tablet computer switching the orientation of the screen, maps orienting themselves with the user’s current orientation and adapting the zoom level to the current speed, and a phone switching on its backlight when used in the dark are examples of computers that are aware of their environment and their context of use. Less than 10 years ago, such functions were not common and existed only on prototype devices in research labs working on context-aware computing.

Author/Copyright holder: SermonAudio.com. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below. Figure 14.1: An iPad switching orientation of the screen is a good example of context-aware computing

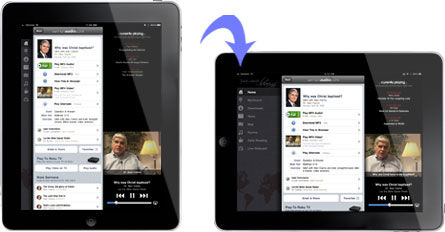

When we aim to create applications, devices, and systems that are easy to use, it is essential to understand the context of use. With context-aware computing, we now have the means of considering the situation of use not only in the design process, but in real time while the device is in use. In Human-Computer Interaction (HCI), we traditionally aim to understand the user and the context of use and create designs that support the major anticipated use cases and situations of use. In Context-Aware Computing, on the other hand, making use of context causes a fundamental change: we can support more than one context of use, each with an equally optimal design. At runtime, when the user interacts with the application, the system can decide what the current context of use is and provide a user interface specifically optimized for this context.

With context-awareness, the job of designing the user interface typically becomes more complex, as the number of situations and contexts in which the system will be used usually increases. In contrast to traditional systems, we do not design for a single context of use, or a limited set of them; instead, we design for several contexts. The advantage of this approach is that we can provide optimized user interfaces for a range of contexts.

Let us assume the following example: You are asked to design a user interface for a wrist watch. In your research you find out that people will use it indoors and outdoors, they will use it in the dark as well as in sunlight, they will use it when they run to catch the train as well as when they sit in a lecture and are bored. As a good user interface designer, you end up with many ideas for an exciting user interface for each situation: For example, when the user is running to catch the train, the user interface should show the time, highlighting the minutes and seconds in a very large font.
On the other hand, when the user is attending a lecture, the user interface should show the time in a very small font and additionally provide a funny quote. In a traditional design process, you would realize, after creating your sketches and design briefs, that you have to decide which one of your ideas for a user interface you want to use. You would realize that supporting all the situations in a single design will not work. The typical result is a compromise, which often loses much of the edge of the ideas you initially came up with.

However, if you take the approach of Context-Aware Computing, you could create a context-aware watch, where you combine all your situation-optimized designs in a single design. If you designed your watch so that it could recognize each of the situations that you had found in your initial research (e.g. running to catch the train, attending a lecture, etc.), your watch could reconfigure itself based on the recognized context. Figure 2 shows a design sketch for a context-aware watch.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below. Figure 14.2: Design sketches that illustrate ideas for time visualizations in different contexts. Left: for users that run to catch the train; making it easy to see the minutes. Middle: a time visualization for boring lectures and meetings; shows a countdown to the end, and some information to engage the user. Right: visualization that gives only a very coarse idea of the time, similar to the information you get from the sun, e.g. for hanging out with friends when time does not matter. With context-awareness, you could create a product that combines all three visualizations and chooses the most appropriate one according to the recognized context.
The example shows the great advantage of context-aware computing systems: the freedom of design is increased. At the same time, systems become more complex and often more difficult to design and implement. In this chapter, we introduce the basics for creating context-aware applications and discuss how these insights may help design systems that are easier and more pleasant to use.

Author/Copyright holder: Courtesy of Albrecht Schmidt. Copyright terms and licence: CC-Att-ND (Creative Commons Attribution-NoDerivs 3.0 Unported). What is Context?

Author/Copyright holder: Courtesy of Albrecht Schmidt. Copyright terms and licence: CC-Att-ND (Creative Commons Attribution-NoDerivs 3.0 Unported). Advice, Criticisms and Business Value

Author/Copyright holder: Courtesy of Albrecht Schmidt. Copyright terms and licence: CC-Att-ND (Creative Commons Attribution-NoDerivs 3.0 Unported). Guidelines for Designing Products using Context Aware Computing

Author/Copyright holder: Courtesy of Albrecht Schmidt. Copyright terms and licence: CC-Att-ND (Creative Commons Attribution-NoDerivs 3.0 Unported). Current Challenges and the Future of Context Aware Computing

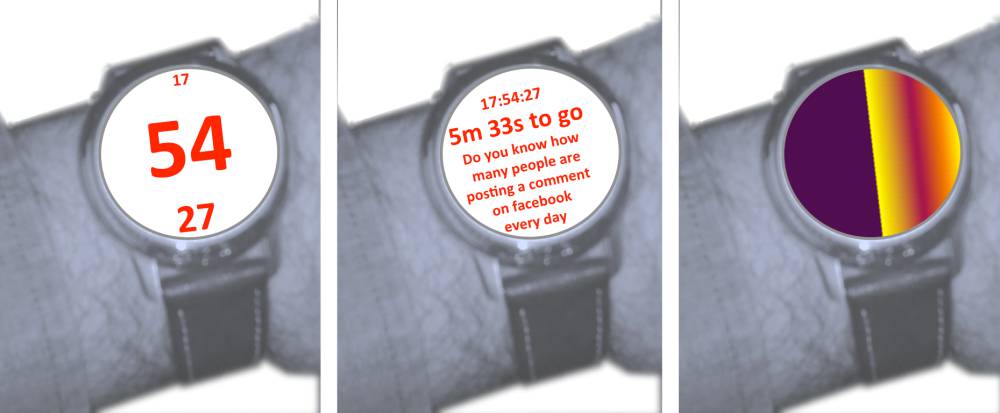

In the early days of computing, the context in which systems were used was strongly defined by the place in which computers were set up, see Figure 3. Personal computers were used in office environments or on factory floors. The context of use did not change much, and there was little variance in the situations surrounding the computer. Hence, there was no need to adapt to different environments. Many traditional methods in the discipline of Human-Computer Interaction (HCI), such as contextual inquiry or task analysis, have their origin in this period and are easiest to use in situations that do not constantly change.

With the rise of mobile computers and ubiquitous computing, this changed. Users take computers with them and use them in many different situations, see Figure 4. At the beginning of the mobile computing era, in the late 80s and 90s, the central theme was how to make mobility transparent for the user, automatically providing the same service everywhere. Here, transparent meant that the user did not have to care about changes in the environment and could rely on the same functionality independent of the environment.

In the early 90s, research into ubiquitous computing at Xerox PARC caused a shift in thinking. In addition to making functionality transparent, such as providing network connectivity throughout a campus without the user noticing the hand-over between different networks, researchers discovered the potential to exploit the context of use as a resource to which systems can adapt. In his 1994 paper at the Workshop on Mobile Computing Systems and Applications (WMCSA), Bill Schilit introduces the concept of context-aware computing and describes it as follows:

Such context-aware software adapts according to the location of use, the collection of nearby people, hosts, and accessible devices, as well as to changes to such things over time. A system with these capabilities can examine the computing environment and react to changes to the environment.

The basic idea is that mobile devices can provide different services in different contexts, where context is strongly related to the location of a device. Much of the initial research on context-aware computing hence focused on location-aware systems. In this sense, the widely-used satellite navigation systems in cars today are context-aware systems. However, context is more than location, as we argue in (Schmidt et al 1999) and throughout this chapter.

Author/Copyright holder: Courtesy of Library of Virginia. Copyright terms and licence: pd (Public Domain (information that is common property and contains no original authorship)).


Author/Copyright holder: Courtesy of The Library of the London School of Economics and Political Science. Copyright terms and licence: pd (Public Domain (information that is common property and contains no original authorship)). Figure 14.3 A-B-C: In the early days of computing, the context was defined by the computer as the computer was the actual workplace. Later, computers were set up in a specific location to help with a specific task, and hence the context was strongly defined by the location of the computer. Personal computers were used in office environments or on factory floors.


Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below. Figure 14.4 A-B: Even in the early days of mobile computing, where notebook computers were considered mobile devices, users could choose the context in which to work. With the rise of mobile and handheld computers and ubiquitous computing, this changed even more radically. Users take computers with them and use them in many different situations in the real world. Next time you go for a walk, observe the multitude of mobile usage scenarios and you will be surprised what people do with their devices


In a Satellite Navigation System (SatNav), the current location is the primary contextual parameter that is used to automatically adjust the visualization (e.g. map, arrows, directions…) to the user’s current location. An example is shown in Figure 5. However, looking at current commercial systems, much more context information is used and much of the visualization has changed. In addition to the current GPS position, contextual parameters may include the time of day, light conditions, the traffic situation on the calculated route, or the user’s preferred places. Beyond the visualization and whether or not to switch on the backlight, the calculated route itself can be influenced by context, e.g. to avoid streets that are potentially busy at that time of day.

Author/Copyright holder: Courtesy of Eirik Solheim Copyright terms and licence: CC-Att-SA-2 (Creative Commons Attribution-ShareAlike 2.0 Unported).

Author/Copyright holder: Courtesy of Satish Krishnamurthy. Copyright terms and licence: CC-Att-2 (Creative Commons Attribution 2.0 Unported). Figure 14.5 A-B: Navigation devices have become common and are widely used in cars and by pedestrians. Figure 5A shows a TomTom navigation application on a Nokia N95 device. Figure 5B shows Google Maps on another Nokia device. SatNavs are probably the most widespread context-aware computing systems


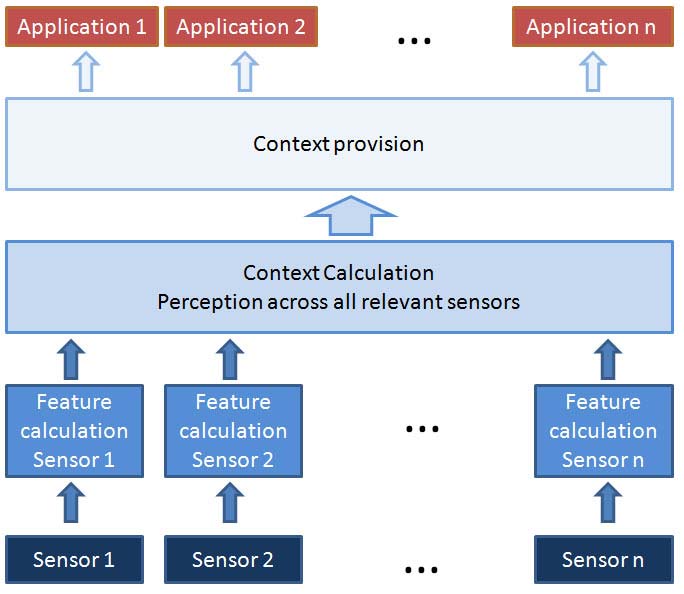

At house entrances and in hotel hallways, automatic lights have become common. These systems can also be seen as simple context-aware systems. The contextual parameters taken into account are the current light conditions and whether there is motion in the vicinity. The adaptation mechanism is fairly simple. If the situation detected is that it is dark and that there is someone moving, the light will be switched on. The light will then stay on as long as the person moves, and after a period where no motion is detected, the light will switch off again. Similarly, the light will switch off if it is not dark anymore.

These simple examples outline the basic principle of a context-aware system. In Figure 6 a reference architecture for context-aware computing systems is shown. Sensors provide data about activities and events in the real world. Perception algorithms make sense of these stimuli and classify the situations into contexts. Based on the observed context, actions of the system are triggered.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below. Figure 14.6: The drawing depicts a reference architecture for context-aware computing systems. It assumes that data acquired from sensors supports the contextual behavior of multiple applications
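The automatic light can be written down as a minimal sketch of the sensor, perception, and action stages described above. Everything specific in it is an assumption made for illustration: the class and field names, the darkness threshold of 10 lux, the 30-second hold time, and the premise that the light sensor is shielded from the lamp it controls (otherwise the lamp would switch itself off).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SensorReading:
    """Raw sensor data at one point in time (hypothetical units)."""
    time_s: float   # seconds since the controller started
    lux: float      # ambient light level
    motion: bool    # True if the motion sensor fired


class HallwayLight:
    """Sensors -> perception -> action for the automatic light example."""

    def __init__(self, dark_below_lux: float = 10.0, hold_s: float = 30.0):
        self.dark_below_lux = dark_below_lux  # assumed darkness threshold
        self.hold_s = hold_s                  # assumed no-motion timeout
        self.last_motion_s: Optional[float] = None
        self.light_on = False

    def perceive(self, reading: SensorReading) -> dict:
        """Classify raw sensor data into a context description."""
        if reading.motion:
            self.last_motion_s = reading.time_s
        presence = (
            self.last_motion_s is not None
            and reading.time_s - self.last_motion_s < self.hold_s
        )
        return {"dark": reading.lux < self.dark_below_lux, "presence": presence}

    def act(self, context: dict) -> bool:
        """Trigger the action that matches the observed context."""
        self.light_on = context["dark"] and context["presence"]
        return self.light_on

    def step(self, reading: SensorReading) -> bool:
        return self.act(self.perceive(reading))


light = HallwayLight()
for reading in [
    SensorReading(0, 2, True),     # dark, someone moves
    SensorReading(10, 2, False),   # still within the hold time
    SensorReading(45, 2, False),   # no motion for longer than the hold time
    SensorReading(50, 500, True),  # motion, but it is no longer dark
]:
    print(reading.time_s, light.step(reading))
```

Keeping perception separate from action means that a second application could reuse the same context description, which is the point of the reference architecture.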

The notion of context-awareness is closely related to the vision of ubiquitous computing, as introduced by Mark Weiser (see Figure 7) in his seminal paper in the journal Scientific American. As computers become a part of everyday life, it is essential that they are easy to use. This is highlighted by the following statement.

The most profound technologies are those that disappear. They weave themselves into the fabric of everyday life until they are indistinguishable from it.

Author/Copyright holder: Mark Weiser. Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice. Figure 14.7: Mark Weiser stated the vision of ubiquitous computing. Context-awareness is an essential building block for realizing this vision

Many people regard this level of integration of computing technologies as the ultimate goal for computers. In such a situation, technologies would not require much active attention by the user, and would be ready to use at a glance. If this is achieved, computers disappear, not in a technical sense, but from a psychological perspective.

In essence, that only when things disappear in this way are we freed to use them without thinking.

To realize such ubiquitous computing systems with optimal usability, i.e. transparency of use, context-aware behaviour is seen as the key enabling factor. Computers already pervade our everyday life (in our phones, fridges, TVs, toasters, alarm clocks, watches, etc.), but to fully disappear, as in Weiser's vision of ubiquitous computing, they have to anticipate the user’s needs in a particular situation and act proactively to provide appropriate assistance. This capability requires a means for the system to be aware of its surroundings, i.e. context-awareness.

There are many examples of computing systems that are so well implemented that users are not aware that they have interacted with them. Cars are a prime example: ABS (anti-lock braking system) and ESP (Electronic Stability Program) are integrated in cars and influence their behaviour in extreme situations. Nevertheless, most people will not consciously think of these technologies when operating a car. These technologies are ubiquitous and have disappeared from the user’s conscious mind.

The term context is widely used with very different meanings. The following definitions from the dictionary, as well as the synonyms, provide a basic understanding of the meaning of context. context, noun. Cause of event

the situation within which something exists or happens, and that can help explain it

Cambridge Dictionary

Synonyms for context: circumstance, situation, phase, position, posture, attitude, place, point, terms, regime, footing, standing, status, occasion, surroundings, environment, location, dependence. Context-aware computing literature also has several definitions and explanations of what context is. The following are prominent examples that highlight the basic understanding shared in the community. At the University of Kent, research was conducted that looked at how mobile devices with GPS (externally connected), network access, and further sensors can be used to support the fieldwork of mobile workers. The research team suggested the following definition:

[…] 'context awareness', a term that describes the ability of the computer to sense and act upon information about its environment, such as location, time, temperature or user identity. This information can be used not only to tag information as it is collected in the field, but also to enable selective responses such as triggering alarms or retrieving information relevant to the task at hand.

Author/Copyright holder: Courtesy of Tom Oates. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). Figure 14.8: Lancaster castle is one of the locations that was featured in the GUIDE tourist system.

The GUIDE project (GUIDE 2001) at Lancaster University was the first larger and public installation of a research prototype to explore context-awareness in the domain of tourism. It focused on how context can be used to advance a mobile information system for visitors to the historic town of Lancaster. Keith Mitchell suggests the following notion of context in his thesis, based on work with the GUIDE system:

[…] two classes of context were identified, namely personal and environmental context. […]. Examples of environmental context include: the time of day, the opening times of attractions and the current weather forecast.

This is an understanding of context where the users themselves are part of the context (e.g. profiles, preferences). Technically, GUIDE followed an interesting approach as it used a modified browser in which context information was used in the background to adapt content and presentation. Anind Dey has suggested a very generic description of what constitutes context:

Context is any information that can be used to characterize the situation of an entity. An entity is a person, place, or object that is considered relevant to the interaction between a user and an application, including the user and application themselves.

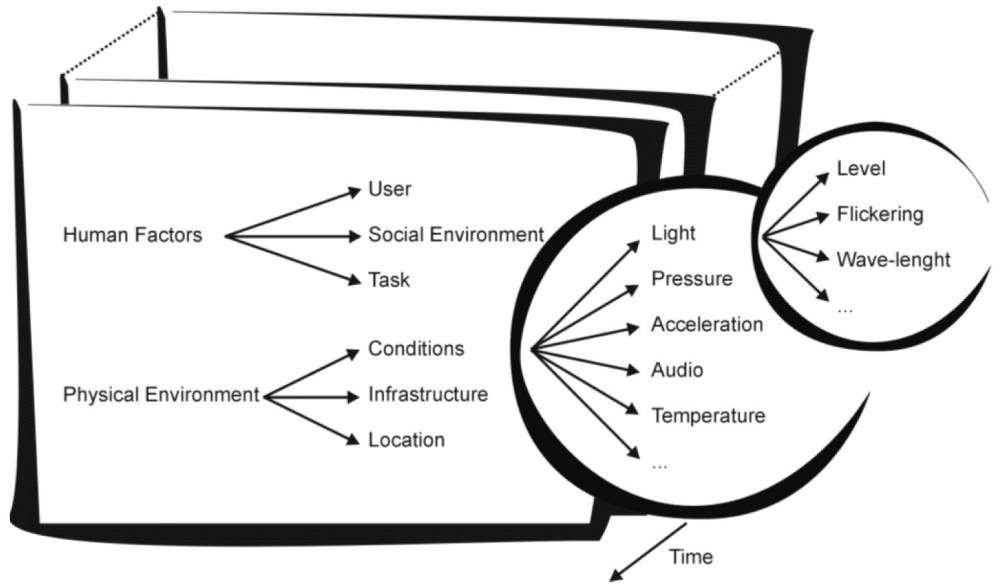

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below. Figure 14.9: This traditional board advertises the same product to all people who pass by - home made soup with bread. In the future such boards will be replaced with digital screens and then it becomes possible to make the content adapt to the current context. If you are interested in the future visions of public display networks have a look at pd-net.org

For practical purposes, context is often structured hierarchically, describing the relevant features. The feature space that I described in (Schmidt et al 1999) is an example of such a structured representation of context, see Figure 10.

Let us assume you want to design a digital replacement for the menu you often find at the entrance of a restaurant (see Figure 9 for an example). A version that is not context-aware would typically show the special of the day. If you designed it as context-aware instead, you would want to have different suggestions on the menu depending on who is walking past it. If parents with children walk by, you would show the family-oriented menu; if a couple is looking at it in the evening, you would show the menu for a candlelight dinner; and if it is hot and sunny in the afternoon, you would advertise your selection of ice cream.

A feature space for this application could include the people looking at it, the time of day, and the weather. People could be refined to number of people, age, and gender. The weather could include temperature and whether it rains or not. By providing such a structured space, it becomes easier to link contexts in the real world to adaptations in the system. As an exercise, try to create a full feature space for the menu and define appropriate adaptations. Even a checklist could be considered a very simple example of a non-hierarchical feature space.
There is no feature space that is complete and describes all possible options – such a feature space would in fact be an attempt to provide a complete description of the world. The usual approach is to create a feature space for the specific context-aware application that is designed. The advantage of a feature space is that by looking at a set of parameters, it can be easily determined if a situation matches a context or not.
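To illustrate how a feature space makes it easy to check whether a situation matches a context, here is a minimal sketch for the restaurant menu example. The class names, the simplification of age to a `has_children` flag, the hour ranges, and the 25 °C threshold for "hot" are all assumptions chosen for illustration; a real design would derive them from user research.

```python
from dataclasses import dataclass


@dataclass
class People:
    count: int
    has_children: bool   # simplification of the "age" feature


@dataclass
class Weather:
    temperature_c: float
    raining: bool


@dataclass
class Situation:
    """One point in the (hierarchical) feature space."""
    people: People
    hour: int            # time of day, 0-23
    weather: Weather


# Each context is a name plus a predicate over the feature space.
# The order encodes priority: the first matching context wins.
CONTEXTS = [
    ("family menu", lambda s: s.people.has_children),
    ("ice cream", lambda s: 12 <= s.hour < 18
        and s.weather.temperature_c >= 25
        and not s.weather.raining),
    ("candlelight dinner", lambda s: s.people.count == 2 and s.hour >= 18),
]


def choose_menu(situation: Situation) -> str:
    """Return the adaptation for the first context the situation matches."""
    for name, matches in CONTEXTS:
        if matches(situation):
            return name
    return "special of the day"   # behaviour of the non-context-aware board


print(choose_menu(Situation(People(2, False), 20, Weather(15, False))))
```

Adding a new context then means adding one entry to `CONTEXTS`, without touching the matching logic.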

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below. Figure 14.10: Context feature space, detailing light as one feature in the conditions of the physical environment

Design Hint 1: When building a context-aware system, first create a (hierarchical) feature space with factors that will influence the system behaviour

Knowing which factors should influence the system behaviour, one can start to look at how these factors can be determined in the devices. In many cases this will require sensors that allow the provision of context.


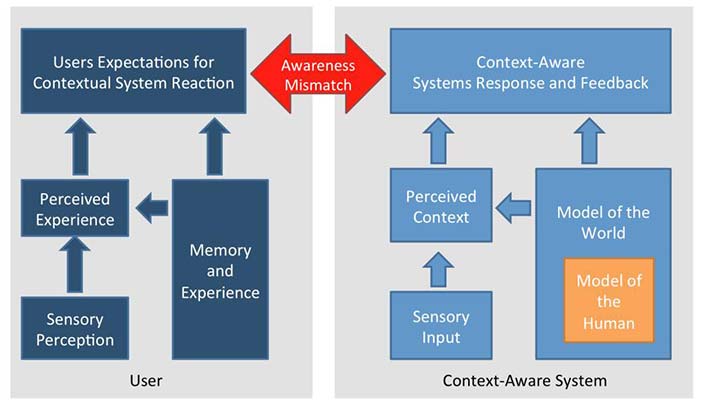

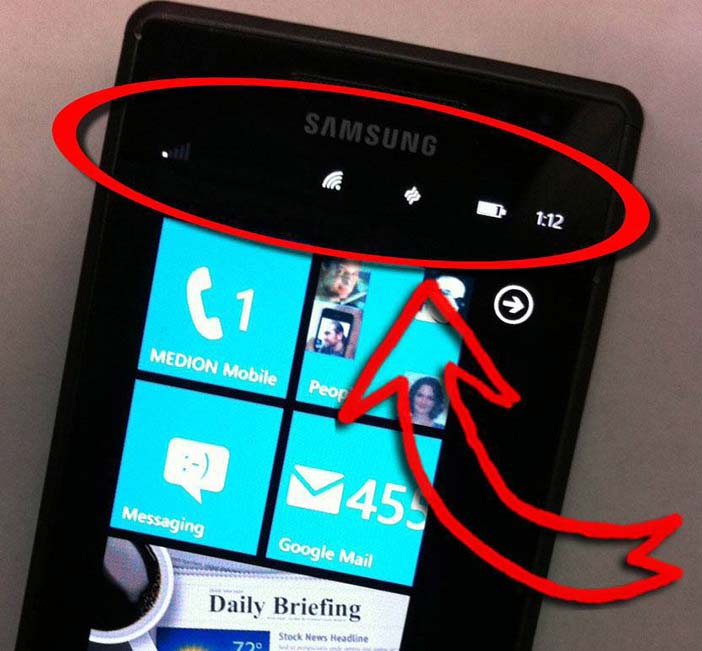

The ultimate goal of a context-aware system is for the system to arrive at a representation of the surrounding world that is close to the perception of the user. An important question is how to narrow the gap between the user’s and the system’s perception (or understanding) of the real world. For location, different means of sensing (e.g. GPS) and interpretation (World Geodetic System WGS84, post code) are well-established. However, for many other sensors there is typically no single and well-understood way of interpreting the sensed information.

The user’s perception of the surroundings is based on human senses, but relates at the same time to experience and memory. Human perception is multifaceted. When walking home from the bus stop late in the evening, a user may perceive that it is dark, quiet, and cold, but at the same time he may perceive the situation as scary. Another user, who was busy the whole day and surrounded by people, may perceive the situation also as dark, quiet, and cold, but at the same time as relaxing and free. This example shows that relying on sensor data alone does not provide the complete picture. It is important to remember that even a perfect design and implementation will not be able to perceive the environment in exactly the same way as the user does.

We now have the following ingredients: the user’s perception and the user’s experience, which both lead to the user’s expectations; the system’s perceived context, drawing from the sensor input; and the system’s model of the world, including a model of the user, driving the system’s reaction. The main goal of a good and usable design should be to minimize the "awareness mismatch", as illustrated in Figure 11.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.
Figure 14.11: The shown User-Context Perception Model (UCPM) highlights the parallel perception processes in the user and in the system. If they are different we create systems with an awareness mismatch, where the system behaviour does not correspond with the users’ expectations.

The User-Context Perception Model (UCPM) is a model created to help the designer understand the challenge of creating context-aware systems. It does not describe the way humans work, nor does it prescribe how to implement the system. Nevertheless, the system side of the model can provide a good starting point for designing the system architecture of context-aware applications.

By considering the example of a car navigation system, we can look a bit more into the details of the UCPM. If you use a navigation system, you will probably have noticed that it works very well if you are in a city you have never been to before. If you use it around the area where you live, you may, however, sometimes be surprised about the route it tries to direct you to. This phenomenon can be explained with the UCPM. Let’s assume the context-aware system (right side in Figure 11) is of equal quality in both locations; this means that the difference must be on the user side. The sensory perception (e.g. visual matching of buildings and places you know based on your eyesight) is different in a familiar place and a new place. For the new place, you lack reference points, and the Memory and Experience part in the model differs significantly. In the familiar environment, you will have expectations about which route to take and which way would be a good choice. In the unfamiliar environment, you lack experience and reference points, and hence your expectation is simply that the system will guide you to your destination.
The result is that a navigation system that successfully guides you to your destination with a non-optimal route will satisfy your expectations in an unfamiliar environment, but be frowned upon in a familiar environment. A non-optimal route could include taking a detour of a few blocks because the map is out of date, or having to wait at three traffic lights where you could alternatively have used the slightly longer way over the bridge without traffic lights. In the familiar environment, we have a substantial awareness mismatch, whereas when navigating in new surroundings, we have a minimal awareness mismatch.

Design Hint 2: In the user interface, provide information about the sensory information that is used to determine the context in order to minimize the awareness mismatch.

The quality of context-aware systems, as perceived by the user, is directly related to the awareness mismatch, and a good design aims at systems with minimal awareness mismatch. A prerequisite for creating a minimal awareness mismatch is that the user understands what factors have an influence on the system. In the example of a simplistic car navigation system, the only contextual factor is the current location. In such a case the user knows that the system's reactions are based purely on the current location and the destination, and the user may attribute the system's response to these factors. In cases where further parameters play a role, e.g. a navigation system that takes traffic into account, it may be more difficult for the user to understand the causalities behind the system's behaviour. In such an example, the navigation system may suggest different routes in the morning and the evening, as the traffic situation is not the same. If the user does not know that the system bases its routing suggestions on the current location and the traffic situation, it is likely that the user will have a hard time understanding what the system does.
As an important rule in the design of context-aware systems, the user should be made aware of the sensory information that the system uses. There are many examples of devices and applications that provide such feedback, e.g. the type of wireless connectivity in a mobile phone and the symbol for GPS reception. Such hints are essential for the user to understand system behaviour. For example, the user may understand, and accept, that there are significant differences in download speed on a mobile device when told that downloads in some cases happen over the GSM network and in other cases over a WiFi connection. However, if the user has no concept of the difference between a data connection over GSM and WiFi, all this information will not be of much help, and the awareness mismatch remains. Therefore, design hints such as the above-mentioned Design Hint 2 are not absolute rules and do not exempt the designer from doing user studies and usability tests: Know thy user, as a popular one-liner goes.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below. Figure 14.12: Phones provide very simple context information in the user interface. In this picture the phone has connectivity to the GSM network as well as to a WiFi base station. Having this information allows the user to better understand system behaviour - for example in the event that the user talks on the phone and the speech quality gets worse after entering a building. Looking at the bars indicating the network strength, the user may realize that the coverage is inadequate. As you have a mental model of the problem and its solution, you move towards a window or back towards the door to regain a satisfactory signal quality.

The very basic idea of sensor-based context awareness is the assumption that similar situations (considered as one context) are represented by similar stimuli. Therefore, sensors may be used to determine contexts based on the assumption that in similar contexts the sensory input of the characterizing features is similar. The difficult part is to assess and define what the relevant and characterizing features are. As humans we do this in our everyday activities over and over again. We realize there is a meeting in progress when entering a room with people sitting around a table talking - even if we do not know the room or anyone taking part in the meeting. The basic approach is to make an (implicit) analysis of the sensory input received from the surroundings and compare this to situations experienced earlier.

Let us assume there is an evening meeting at the University of Stuttgart in the first-floor meeting room of the SimTech building, and furthermore that there is a meeting in Lancaster in the second-floor meeting room of the InfoLab - both at 10am. Let’s compare sensory readings for these two situations and add two further situations: a student lab session in Stuttgart, and cleaning of the meeting room in Lancaster.


| Feature | Meeting Stuttgart | Meeting Lancaster | Lab Session | Cleaning |
| --- | --- | --- | --- | --- |
| Geographic location | Stuttgart | Lancaster | Stuttgart | Lancaster |
| Light on or off | light on | light off | light off | light on |
| Number of people in the room | 7 | 9 | 8 | 1 |
| Language spoken | German | English | German | None |
| Activity in the room | sitting | sitting | sitting | movement |
| Power consumption in the room | 2 kW | 1.6 kW | 3.4 kW | 3 kW |
| Devices in use | laptop, phone | projector, laptop | laptop, phone | vacuum cleaner |

Table 14.1: Example of situations and their characterizing features

If we create a matrix in which we count how many features are the same, we arrive at the results in Table 14.2. Using this feature set, we see that the Meeting in Stuttgart is more similar to a Lab Session than to another meeting in Lancaster. If we chose another set of features, we would get different similarities. This illustrates how important it is to choose the right features for classification. It is important to find the specific features for a context, and in many cases adding further features may be counter-productive.
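The counting described above can be sketched in a few lines of Python. This is only an illustration: the feature values are copied from Table 14.1, while the data layout and the function names (`matching_features`, `similarity_matrix`) are assumptions made for this sketch. Two feature values count as "the same" only if they are exactly equal.

```python
# Feature values copied from Table 14.1. The tuple order is:
# location, light, people, language, activity, power (kW), devices.
# The data layout and all names here are assumptions for illustration.
SITUATIONS = {
    "Meeting in S": ("Stuttgart", "on", 7, "German", "sitting", 2.0,
                     frozenset({"laptop", "phone"})),
    "Meeting in L": ("Lancaster", "off", 9, "English", "sitting", 1.6,
                     frozenset({"projector", "laptop"})),
    "Lab Session": ("Stuttgart", "off", 8, "German", "sitting", 3.4,
                    frozenset({"laptop", "phone"})),
    "Cleaning": ("Lancaster", "on", 1, None, "movement", 3.0,
                 frozenset({"vacuum cleaner"})),
}


def matching_features(a, b):
    """Count the features whose values are exactly equal in both situations."""
    return sum(1 for x, y in zip(a, b) if x == y)


def similarity_matrix(situations):
    """Return a nested dict: matrix[row][col] = number of equal features."""
    names = list(situations)
    return {
        row: {col: matching_features(situations[row], situations[col])
              for col in names}
        for row in names
    }


matrix = similarity_matrix(SITUATIONS)
for name, row in matrix.items():
    print(f"{name:13}", *(f"{count:2}" for count in row.values()))
```

With exact equality as the only similarity measure, numeric features such as the number of people or the power consumption almost never match, which is one reason why naive counting gives misleading similarities.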

| Similarity | Meeting in S | Meeting in L | Lab Session | Cleaning |
| --- | --- | --- | --- | --- |
| Meeting in S | 7 | 1 | 4 | 1 |
| Meeting in L | 1 | 7 | 2 | 1 |
| Lab Session | 4 | 2 | 7 | 0 |
| Cleaning | 1 | 1 | 0 | 7 |

Table 14.2: Counting similar features for each pair of situations.

It becomes clear that just counting any set of features is not going to work well. Choosing the “right” features that are characteristic is essential. The general approach is to look at which sensor input you expect in a certain context. In Table 14.3, two examples are given. A meeting is detected when several people are present and when these people interact. When the sensor information indicates an ongoing change in light and a certain audio level, as well as an indoor location where the user is stationary, we assume the user is watching TV. The examples show that the expected sensor readings are related to a feature space, described in Figure 14.10.

Looking at these descriptions of the expected sensor input, it is apparent that the detection is never perfect. It is easy to create situations that are not a meeting, but are classified as a meeting (e.g. having lunch together is likely to be classified as a meeting with the description below). Similarly, we can create a situation that belongs to the context, but is not recognized with the expected sensor input. If the user watches TV while in the garden and perhaps even uses subtitles and has the sound switched off, this would not be recognized. Such descriptions can be made on very different abstraction levels (e.g. people are present vs. the passive infrared sensor indicating movement). The descriptions used typically depend on the types of sensors assumed.

| Context | Expected Sensor Input |
| --- | --- |
| Meeting | Several people present; interaction between people |
| User watching TV | Light level/color is changing; certain audio level (not silent); type of location is indoors; user is mainly stationary |

Table 14.3: Example assumptions about the expected sensory input for two contexts

Design Hint 3: Find parameters which are characteristic for a context you want to detect and find means to measure those parameters.

In current systems, a wide variety of sensors are used to acquire contextual information. Important sensors are GPS (for location and speed), light and vision (to detect objects and activities), microphones (for information about noise, activities, and talking), accelerometers and gyroscopes (for movement, device orientation, and vibration), magnetic field sensors (as a compass to determine orientation), proximity and touch sensing (to detect explicit and implicit user interaction), sensors for temperature and humidity (to assess the environment), and sensors for air pressure/barometric pressure. There are also sensors to detect the physiological context of the user (e.g. galvanic skin response, EEG, and ECG). Galvanic skin response measures the resistance between two electrodes on the skin. The value measured depends on how dry the skin is. Typically, such measurements can be used to determine reactions that change the dryness of the skin, e.g. surprise or fear (lie detectors are based on similar mechanisms).

In principle, one can use all types of sensors available on the market to feed the system with context information. In some applications, it may make sense to use several sensors of the same type to ease the context detection task. For example, determining the number of speakers and locating their positions in a room is straightforward with a set of microphones, whereas this is impossible with a single microphone. The quality of the information gained may also be improved by using a set of sensors rather than one. A simple example is that with a single light sensor one can only detect the light level in the environment, but a larger number of light sensors are the basis for a camera.
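The expected sensor inputs in Table 14.3 can be read as simple rules over already-abstracted sensor readings. The following Python sketch illustrates this. The `SensorSnapshot` fields, the thresholds `MIN_PEOPLE_FOR_MEETING` and `SILENCE_DB`, and the function names are all assumptions chosen for the example; they are not taken from any particular system.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for this sketch.
MIN_PEOPLE_FOR_MEETING = 3   # how many people count as "several"
SILENCE_DB = 30.0            # audio level below which the room counts as silent


@dataclass
class SensorSnapshot:
    """Abstracted sensor readings at one point in time (assumed fields)."""
    people_present: int
    people_interacting: bool
    light_changing: bool
    audio_level_db: float
    indoors: bool
    user_stationary: bool


def is_meeting(s: SensorSnapshot) -> bool:
    # Table 14.3: several people present and interaction between people.
    return s.people_present >= MIN_PEOPLE_FOR_MEETING and s.people_interacting


def is_watching_tv(s: SensorSnapshot) -> bool:
    # Table 14.3: changing light, not silent, indoors, user mainly stationary.
    return (s.light_changing and s.audio_level_db > SILENCE_DB
            and s.indoors and s.user_stationary)


RULES = {"Meeting": is_meeting, "User watching TV": is_watching_tv}


def detect_contexts(s: SensorSnapshot) -> list[str]:
    """Return the names of all contexts whose rule matches the snapshot."""
    return [name for name, rule in RULES.items() if rule(s)]


# Lunch together: not a meeting, but it satisfies the meeting rule.
lunch = SensorSnapshot(4, True, False, 55.0, True, True)
# TV in the garden with the sound off: the TV rule does not fire.
garden_tv = SensorSnapshot(1, False, True, 20.0, False, True)

print(detect_contexts(lunch))      # ['Meeting']
print(detect_contexts(garden_tv))  # []
```

The two snapshots reproduce the failure cases discussed for Table 14.3: a false detection (lunch classified as a meeting) and a missed detection (watching TV outdoors with the sound switched off).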
To relate sensory information to contexts, a matching has to be performed. These perception tasks are typically done by means of machine learning and data mining. The simplest way is to describe a set of features that define a situation. Then, in any given situation, the system will monitor its sensory input and check if the features match the sensory input. Simple rule-based systems fall into this category. Another approach is to record typical situations and calculate representative features for these situations. In a new situation, the features are calculated and compared to the learned (recorded) situations. With a simple so-called "nearest neighbour matching", the current context can then be calculated.

The quality of the algorithms that calculate the contexts should be assessed to determine how well the system works. These algorithms can be optimized for precision or recall, similarly to classical information retrieval systems. When assessing context-aware systems, it is important to take the probability of a certain context into account; otherwise very rare events may be missed. Assume the following example: You want to build a fire alarm, and you assume that in 10,000 days you will have 1 day where there is a fire. If you pick up a stone from the ground and announce that it is a fire alarm, you can be pretty sure that it does not work as such. Nevertheless, you can still claim that your “fire alarm” works 99.99% of the time. However, when the recognition rate is reported separately for the context “fire” (0%) and the context “no fire” (100%), it becomes immediately obvious that a stone is not a useful device for this purpose. To assess this, a confusion matrix is used. It shows the relationship between the real situation and the perceived context for each context defined.

| Situation in the real world | Perceived by the "system": Fire | Perceived by the "system": No Fire |
| --- | --- | --- |
| Fire | 0 % | 100 % |
| No Fire | 0 % | 100 % |

Table 14.4: Confusion matrix for a stone used as a "fire alarm"

| Situation in the real world | Perceived by the system: Fire | Perceived by the system: No Fire |
| --- | --- | --- |
| Fire | 100 % | 0 % |
| No Fire | 0 % | 100 % |

Table 14.5: Confusion matrix for an optimal fire alarm

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below. Figure 14.13: In order to know how well a context detector works, you need to know the recognition performance for each context. Compared with an actual fire alarm, you will most likely agree that a stone does not work well as a fire alarm.
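The arithmetic behind the stone example can be checked with a short Python sketch. It assumes the numbers from the text (one fire day in 10,000 days) and a "detector" that never reports a fire. The function names `stone_detector` and `recognition_rates` are made up for this illustration.

```python
from collections import Counter

CONTEXTS = ["Fire", "No Fire"]


def stone_detector(_real_situation):
    """A stone never raises an alarm, whatever the real situation is."""
    return "No Fire"


def recognition_rates(actual, perceived, contexts):
    """Row-normalised confusion matrix: rates[real][perceived] in 0..1."""
    counts = Counter(zip(actual, perceived))
    rates = {}
    for real in contexts:
        total = sum(counts[(real, p)] for p in contexts)
        rates[real] = {p: (counts[(real, p)] / total if total else 0.0)
                       for p in contexts}
    return rates


# Assumption from the text: 1 day with a fire in 10,000 days.
actual = ["Fire"] + ["No Fire"] * 9999
perceived = [stone_detector(day) for day in actual]

# Overall share of days on which the "alarm" was right.
accuracy = sum(a == p for a, p in zip(actual, perceived)) / len(actual)
rates = recognition_rates(actual, perceived, CONTEXTS)

print(f"overall accuracy: {accuracy:.2%}")
for real in CONTEXTS:
    print(real, {p: f"{r:.0%}" for p, r in rates[real].items()})
```

The overall figure looks excellent only because the "No Fire" context dominates the data; the per-context rows show that the one context the device exists to detect is never recognized.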

These basic categories help in the design of context-based applications. In some cases there may not be a clear discrimination between them, or they may be combined in a single application. The original paper by Schilit et al. (1994) already included a discussion of how context can be used, together with a table that distinguishes what is context-dependent (information or commands) on one side from how context is used (manually or automatically) on the other side. This view of context-aware applications mainly reflects the first and the last types from the above list, i.e. proactive applications and resource management.

It is highly recommended to read this paper by Bill Schilit et al. (Schilit et al. 1994) as it is the cornerstone and central foundation of context-aware computing. If you are interested in more details on the original work on context-awareness, you may want to read Bill Schilit's PhD thesis.